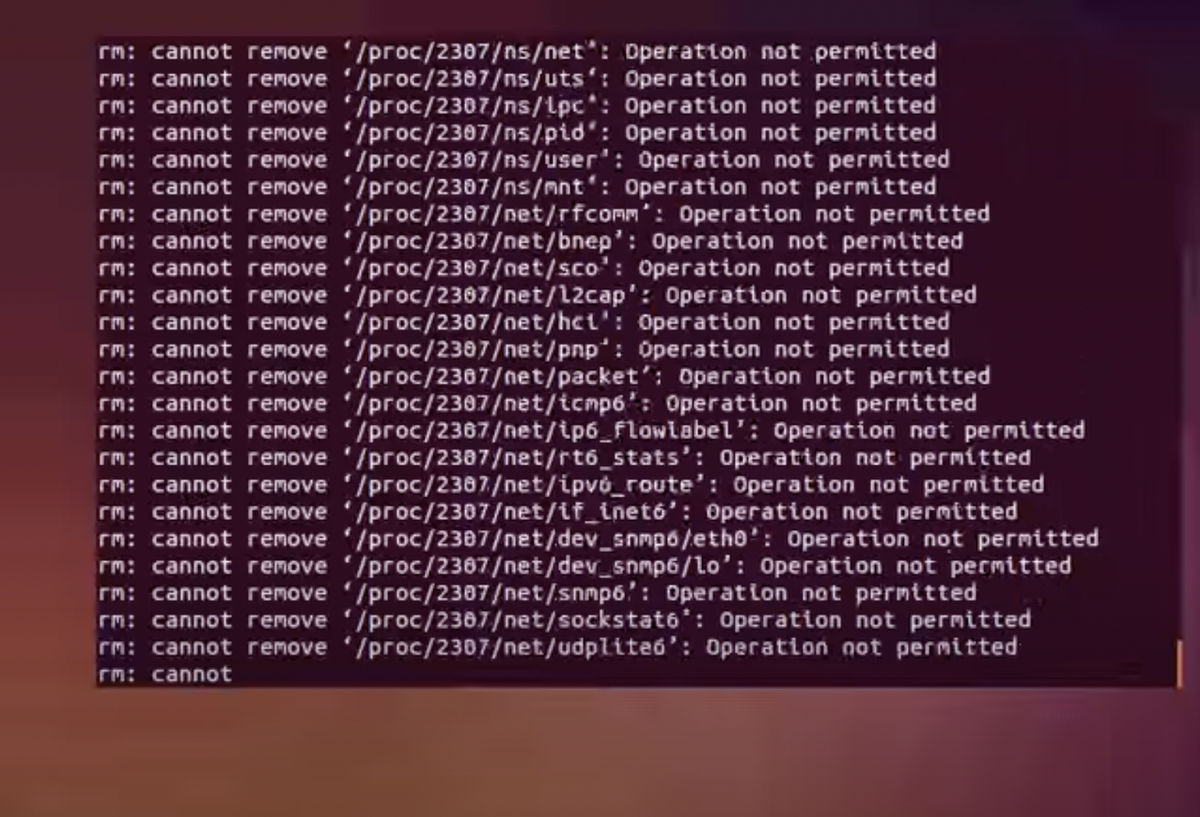

$ sudo rm -rf / === npm install

In what seems like a long time ago, in part because it is, I learned the catastrophic capabilities of this command the hard way, and I'm sure folks of my vintage have similar stories, as it's essentially a rite of passage in the sysadmin world. Back in 1996, I was 14, and whilst the internet has fundamentally changed over the years, one thing has not: the internet is still a dangerous place filled with bad actors.

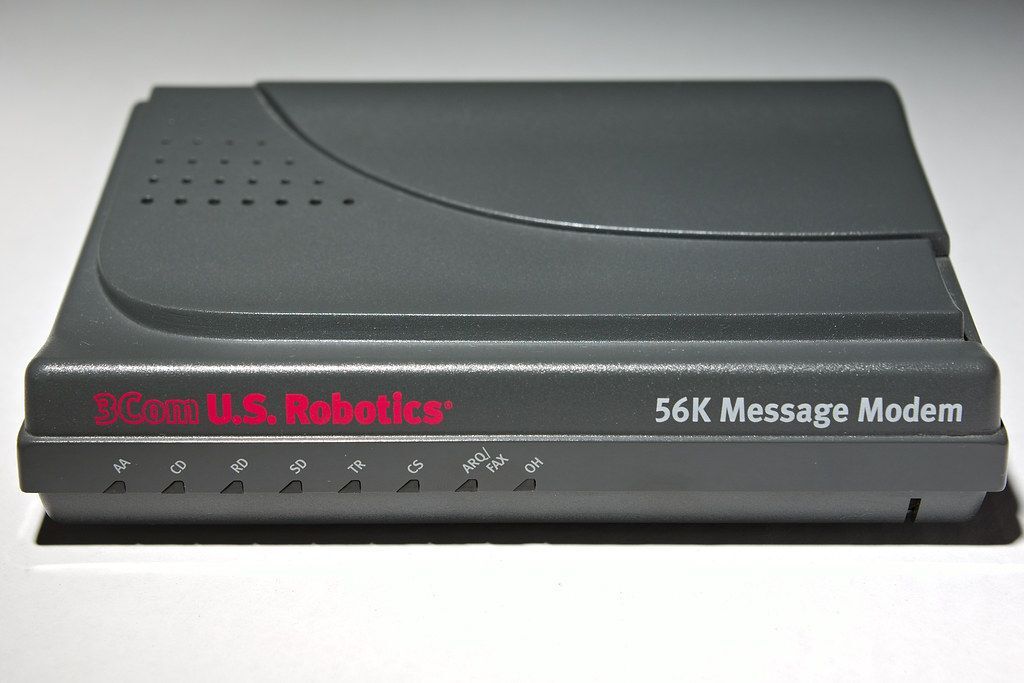

I had spent hours learning how to install Slackware onto my father's Cyrix 6x86 computer, and days learning how to configure the PPP daemon to connect the computer to IBM dot NET via a US Robotics 56K V.92 modem, when life served me a lesson.

At the bottom of the installation guide I was following there were recommendations to join an internet relay chat room for newbies on EFNet (ahoy!) so I did exactly that.

As a member of the generation that is the Eternal September (i.e. complete unawareness of pre-1993 internet etiquette), I launched right into asking my first question without saying hello. The interaction went down something like this:

me: hey, how can I do $x?

random: $ sudo rm -rf /

Being 14 and completely oblivious, I ran the command as root. Poof, days of work down the drain and one important lesson was learned:

Don't trust instructions from random people on the internet

Unfortunately, not much has changed in the last 25 years. The command is dangerous and you should absolutely not run it on your local computer, yet that's what nearly every software developer is risking multiple times a day when they consume a random repository from GitHub on their local computer.

Please take a look at my RFC for making install scripts *opt in* (and thus OFF by default) on @npmjs: https://t.co/DEwEZAUGYo, to hopefully lessen the attack surface that compromised packages can take advantage of in the future, like what we saw today: https://t.co/JF1drpIsdf

— Francisco Tolmasky (@tolmasky) November 5, 2021
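To make the opt-in idea concrete, here is a minimal sketch (not npm's implementation, and not the RFC's proposal) of how a consumer might flag packages that declare install-time lifecycle scripts. The function name and both sample manifests are hypothetical. npm can already be told to skip these hooks with `npm install --ignore-scripts` or `ignore-scripts=true` in `.npmrc`.

```python
import json

# Lifecycle hooks that npm runs automatically during `npm install`.
INSTALL_HOOKS = ("preinstall", "install", "postinstall")


def install_scripts(manifest_text: str) -> dict:
    """Return the install-time scripts declared in a package.json document."""
    manifest = json.loads(manifest_text)
    scripts = manifest.get("scripts", {})
    return {hook: scripts[hook] for hook in INSTALL_HOOKS if hook in scripts}


# Hypothetical manifests, for illustration only.
harmless = '{"name": "some-lib", "scripts": {"test": "node test.js"}}'
suspicious = '{"name": "other-lib", "scripts": {"preinstall": "node fetch-and-run.js"}}'

print(install_scripts(harmless))    # {}
print(install_scripts(suspicious))  # {'preinstall': 'node fetch-and-run.js'}
```

Anything this returns is code that would run on your machine before you have imported a single line of the package.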

25 years have gone by since I discovered that consuming instructions from the internet (be that from a person or from open-source software) might result in a computer needing to be reinstalled. Yet fundamentally not much has changed in our industry to address this problem, even though the consumption of software created by complete internet randoms has skyrocketed.

Ooof - software supply chain breaches are up 650% over the past couple years. (Linux Foundation Membership summit keynote data point).

— Tim Banks stands 5 feet, 8 inches (@elchefe) November 2, 2021

Anyway, to make the problem real: two weeks ago, for approximately 4 hours, a widely used npm package, ua-parser-js, was embedded with a malicious script intended to install a coinminer, harvest user/credential information, and compromise developer workstations.

Gitpod (where I work) was one of the many companies that consumed ua-parser-js as a transitive dependency. As soon as news broke, we audited the Gitpod infrastructure (`git rev-list --all | xargs git grep "ua-parser-js@" | cut -d@ -f2 | uniq`) to determine if an infected version was in use (it wasn't) and, as is best practice, we rotated the application development credentials of our engineers on the off chance someone did any work with yarn.lock during this period.
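The one-liner above lists the version ranges requested across history. As a complementary sketch (not Gitpod's actual tooling), the following reads a classic `yarn.lock` and reports whether a package resolved to a known-bad version. The compromised set is taken from the public advisory for this incident (0.7.29, 0.8.0, 1.0.0); the function name and the sample lockfile are hypothetical.

```python
import re

# Versions of ua-parser-js listed as compromised in the public advisory.
COMPROMISED = {"0.7.29", "0.8.0", "1.0.0"}


def resolved_versions(lockfile_text: str, package: str) -> set:
    """Collect every version a package resolved to in a yarn.lock (v1 format)."""
    versions = set()
    in_entry = False
    for line in lockfile_text.splitlines():
        if line and not line[0].isspace():
            # Unindented line: an entry header such as `pkg@^1.0.0, pkg@^1.1.0:`
            specs = [s.strip().strip('"') for s in line.rstrip(":").split(",")]
            in_entry = any(s.startswith(package + "@") for s in specs)
        elif in_entry:
            match = re.match(r'\s+version "([^"]+)"', line)
            if match:
                versions.add(match.group(1))
    return versions


# Hypothetical lockfile excerpt, for illustration only.
SAMPLE = '''# yarn lockfile v1

typescript@^4.4.3:
  version "4.4.4"

ua-parser-js@^0.7.18, ua-parser-js@^0.7.28:
  version "0.7.29"
'''

hits = resolved_versions(SAMPLE, "ua-parser-js") & COMPROMISED
print(sorted(hits))  # ['0.7.29']
```

An empty result only says the lockfile you scanned is clean; it says nothing about what was installed on a machine while the bad release was live, which is why rotating credentials is still worth doing.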

Because Gitpod builds Gitpod with Gitpod, no packages or dependencies are downloaded onto our engineers' devices, which contains this class of security incident and inhibits malicious actors from pivoting towards completely compromising our employees' workstations.

Cool huh?

This is just one of the reasons why I think that by 2023 working with ephemeral cloud-based dev environments will be the standard, just like CI/CD is today.

Honestly, I would not be surprised if by 2030 insurance companies made the usage of ephemeral sandboxes (in whatever form: be that cloud, OCI, or Firecracker) a condition of issuing cyber insurance. In this distributed world where remote development is now the norm, moving towards ephemeral sandboxes is an important lever to counter the increasing threat of source integrity and supply chain attacks.

On that topic, I personally believe consumers of open-source software need to make adjustments to their workflow in order to achieve supply chain security. There's unfortunately a push happening broadly across our industry right now that roughly translates to "maintainers need to do all this additional labor to make open-source easier to consume by billion-dollar companies" (e.g. maturity ladders) and I think that's wrong.

The thing about open-source software that’s too often forgotten: it’s AS-IS, no exceptions. There is absolutely no SLA. That detail is right there in the license! In business terms, open-source maintainers are unpaid and unsecured vendors.

The pushes for supply chain security are admirable but, personally, I think the industry also needs to invest in developer tooling that prioritizes reproducible builds and makes source/binary substitution more accessible, so that consumers don't need to consume mystery binary packages with questionable contents in the first place.
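For a sense of what a reproducible build buys you, here is a minimal sketch: if the build is deterministic, anyone can rebuild from source and check that the result is bit-for-bit identical to what was published. The function names and the stand-in artifacts are hypothetical, and a real workflow would hash actual tarballs or binaries.

```python
import hashlib


def sha256_hex(artifact: bytes) -> str:
    """Hex-encoded SHA-256 digest of an artifact's bytes."""
    return hashlib.sha256(artifact).hexdigest()


def matches_published(rebuilt: bytes, published_digest: str) -> bool:
    """True when a local rebuild is bit-for-bit identical to the published artifact."""
    return sha256_hex(rebuilt) == published_digest.lower()


# Hypothetical artifacts standing in for real build outputs.
published = b"console.log('hello')\n"
digest = sha256_hex(published)

print(matches_published(b"console.log('hello')\n", digest))          # True
print(matches_published(b"console.log('hello'); evil()\n", digest))  # False
```

The check is trivial; the hard part is tooling that makes the rebuild deterministic in the first place, which is where I think the investment should go.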

$ rm -rf /

— geoff.ayb 👋 🇵🇹 (@GeoffreyHuntley) November 9, 2021
